Live audio translation. Entirely on your Mac.

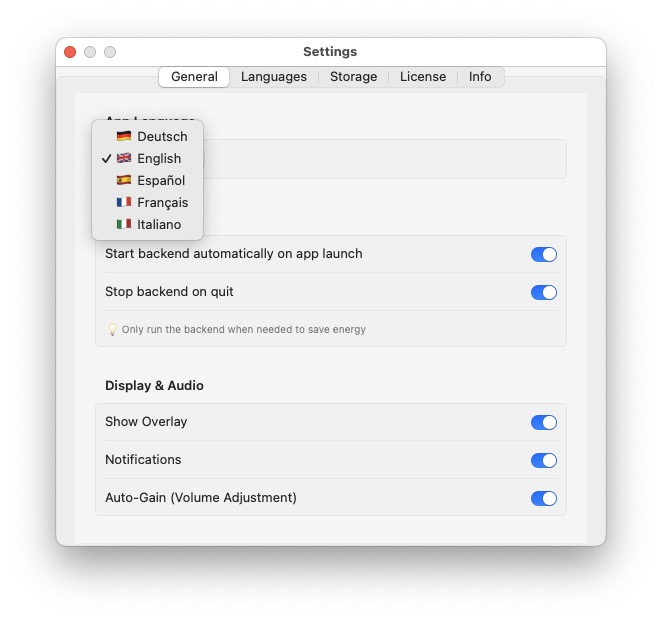

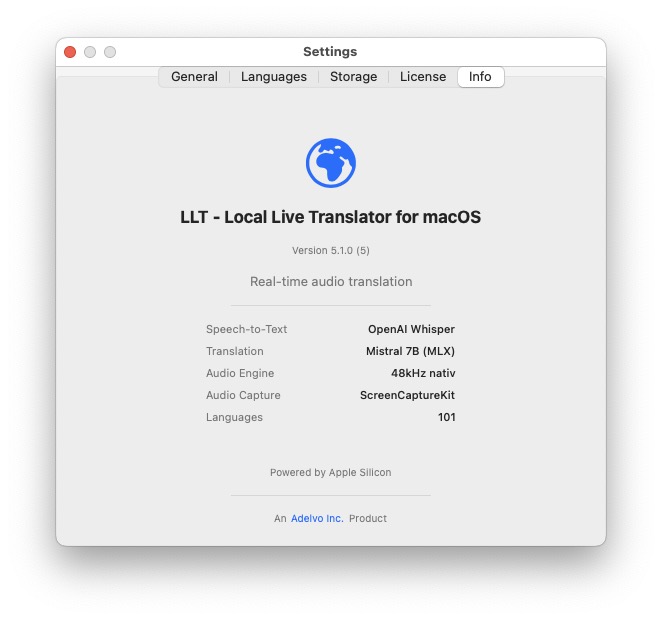

Whisper transcribes. Mistral 7B translates. Both run locally on Apple Silicon — no cloud, no API keys, no data leaves your machine. Pick any audio source, choose a target language, and go.


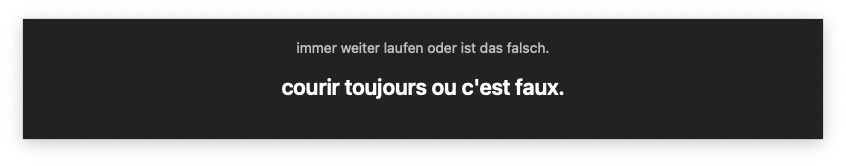

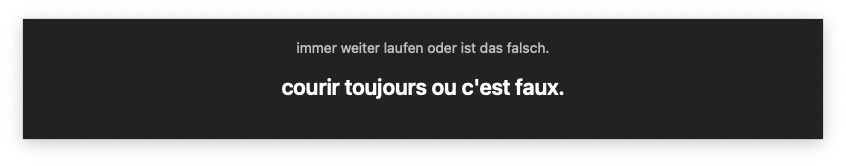

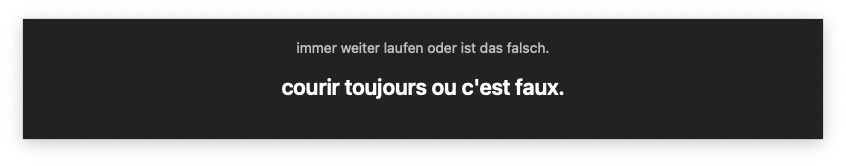

Floating overlay shows original text and translation in real time

Full session transcripts saved as TXT with timestamps

Export subtitles in SRT format for video post-production

Hear translations read aloud via macOS system voice

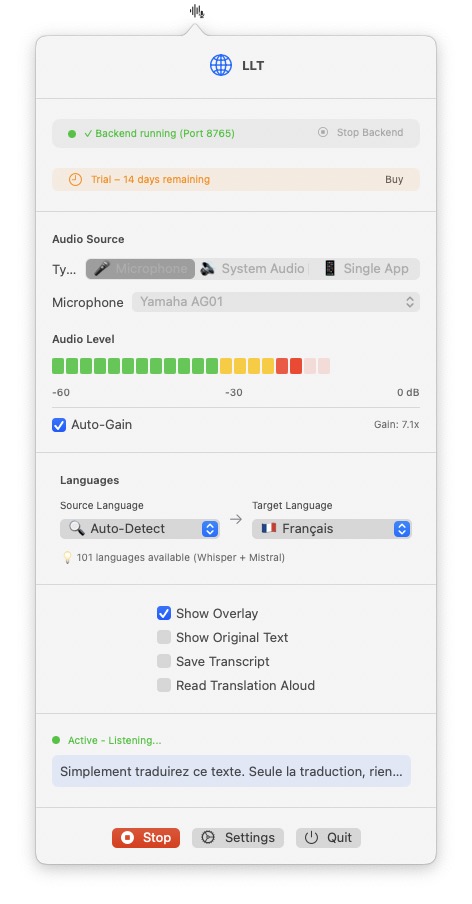

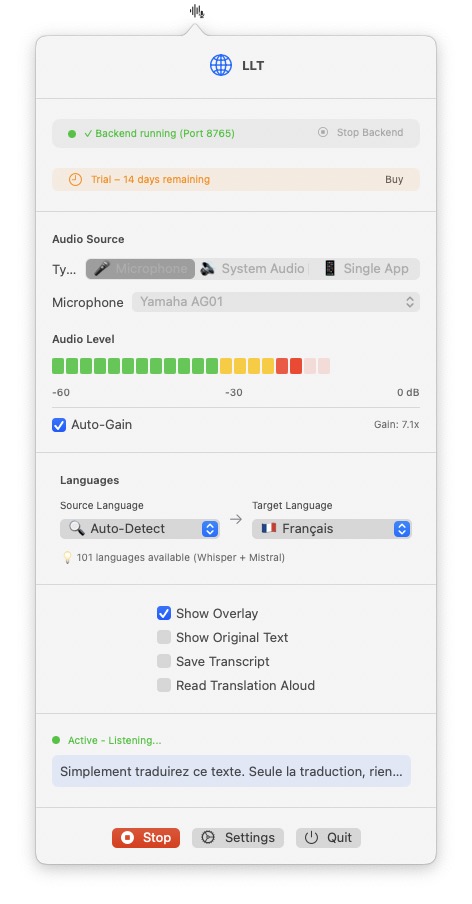

Choose your audio source and LLT captures it. WebRTC, Teams, a mic on a conference table — it doesn't matter. If your Mac can hear it, LLT can translate it.

Any hardware mic, USB mic, or audio interface. Select your device from the dropdown. Put a mic on a table and translate a meeting room in real time.

Capture audio from one specific app — Zoom, Teams, Chrome, Discord, FaceTime, or any other app. Only that app's audio gets translated, nothing else.

Capture all system audio at once. Everything playing on your Mac gets transcribed and translated. Useful as a catch-all for multi-source scenarios.

The backend starts with the app and runs a Python server on localhost. Audio is captured, chunked, sent via WebSocket, transcribed by Whisper, translated by Mistral, and displayed as an overlay — all without leaving your Mac.

Mic, app, or system audio → 3-second chunks via WebSocket to local backend
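Here is a minimal sketch of the chunking step. It assumes 16 kHz mono 16-bit PCM, which is the rate Whisper models work at. The page does not document LLT's actual capture format or its WebSocket message framing, so the JSON envelope and the names `chunk_pcm` and `to_message` are hypothetical.

```python
import base64
import json
from typing import Iterator

SAMPLE_RATE = 16_000   # assumption: 16 kHz mono, the rate Whisper models work at
BYTES_PER_SAMPLE = 2   # assumption: signed 16-bit PCM
CHUNK_SECONDS = 3      # from the pipeline description above


def chunk_pcm(pcm: bytes, seconds: int = CHUNK_SECONDS) -> Iterator[bytes]:
    """Split raw PCM into fixed-length chunks; the final chunk may be shorter."""
    size = SAMPLE_RATE * BYTES_PER_SAMPLE * seconds  # 96,000 bytes for 3 s
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size]


def to_message(chunk: bytes, seq: int) -> str:
    """Wrap one chunk in a hypothetical JSON envelope for the WebSocket."""
    return json.dumps({
        "type": "audio",  # hypothetical field names, not LLT's wire format
        "seq": seq,
        "pcm16": base64.b64encode(chunk).decode("ascii"),
    })


if __name__ == "__main__":
    seven_seconds = bytes(SAMPLE_RATE * BYTES_PER_SAMPLE * 7)  # 7 s of silence
    print([len(c) for c in chunk_pcm(seven_seconds)])  # [96000, 96000, 32000]
```

On a live connection, each `to_message(...)` string would be sent over the socket, for example with the third-party `websockets` package. The page does not say whether LLT sends base64 JSON or raw binary frames.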

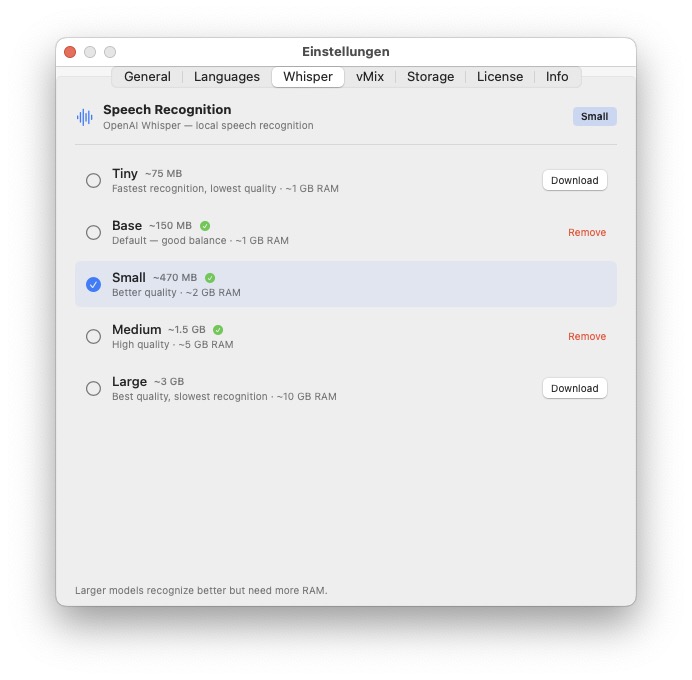

Speech-to-text with auto language detection. 5 models from tiny (~75 MB) to large (~3 GB) — choose in Settings > Whisper. Runs on Neural Engine.

Mistral 7B Instruct (4-bit MLX) translates transcribed text to target language
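The following shows one way a translation request to an instruct model might be phrased. It is a sketch under assumptions: LLT's real prompt wording is not published, and `build_translation_messages` is a hypothetical helper. The instruction goes in the user turn because some Mistral Instruct chat templates do not accept a separate system role.

```python
from typing import Dict, List


def build_translation_messages(text: str, target_language: str) -> List[Dict[str, str]]:
    """Build a single-turn chat asking an instruct model for a bare translation.

    The instruction sits in the user turn because some Mistral Instruct
    chat templates reject a separate system role.
    """
    instruction = (
        f"Translate the following text into {target_language}. "
        "Reply with the translation only, without notes or quotation marks.\n\n"
    )
    return [{"role": "user", "content": instruction + text.strip()}]


if __name__ == "__main__":
    for message in build_translation_messages("  Guten Morgen, alle zusammen. ", "English"):
        print(message["role"], "|", message["content"])
```

With the `mlx-lm` package, a message list like this would usually go through `tokenizer.apply_chat_template(messages, add_generation_prompt=True)` before being passed to `mlx_lm.generate`. Which model repository and generation settings LLT uses is not stated.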

Floating overlay with original + translation (auto-hides after 6s), optional text-to-speech, and full transcript save as TXT or SRT subtitles.
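An SRT file is a sequence of numbered cues. Each cue has a `HH:MM:SS,mmm --> HH:MM:SS,mmm` time range, the text, and a blank line after it. The sketch below assumes segments arrive as `(start, end, text)` tuples with times in seconds from session start, for example one cue per 3-second chunk. The function names are hypothetical, and so is the choice of text (original or translation) that goes into each cue.

```python
from typing import List, Tuple


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp: HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: List[Tuple[float, float, str]]) -> str:
    """Render (start, end, text) segments as numbered SRT cues separated by blank lines."""
    cues = []
    for index, (start, end, text) in enumerate(segments, start=1):
        cues.append(f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n")
    return "\n".join(cues)


if __name__ == "__main__":
    print(to_srt([(0.0, 3.0, "Hello"), (3.0, 6.5, "World")]))
```

Milliseconds are computed once with integer arithmetic and then split. This avoids rounding drift such as `59.9995` turning into `00:00:59,1000`.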

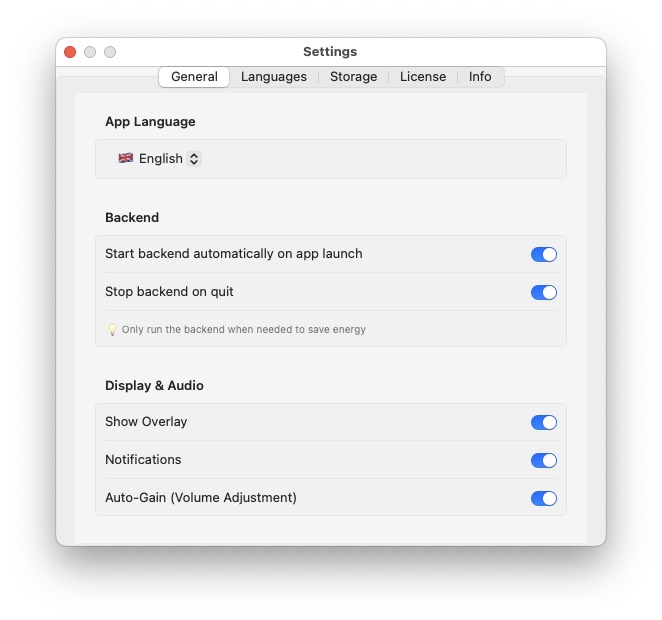

LLT is a menu bar app: no Dock icon, no window clutter. Left-click opens the control panel; right-click shows the status and a Quit option. The backend starts with the app (or on demand), but translation only begins when you press Start.

Backend auto-starts with the app (configurable) and auto-stops on quit. Loads your selected Whisper model + Mistral on startup. Status visible in the control panel and menu bar icon.

Backend running ≠ translating. You manually press Start when you need translation. This keeps resource usage minimal until you actually need it — no background processing when idle.
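The split between "backend running" and "translating" comes down to two independent switches. The sketch below is a hypothetical model of that behavior for an engine that needs the backend, such as Whisper. One rule is an assumption rather than documented behavior: Start refuses to run when the backend is down instead of launching it automatically.

```python
from dataclasses import dataclass


@dataclass
class SessionState:
    """Hypothetical model of the two independent switches for a backend engine."""
    backend_running: bool = False
    translating: bool = False

    def start_backend(self) -> None:
        self.backend_running = True   # models load here; no audio is processed yet

    def press_start(self) -> None:
        if not self.backend_running:  # assumption: Start refuses rather than auto-launching
            raise RuntimeError("backend is not running")
        self.translating = True       # only now is audio captured and chunked

    def press_stop(self) -> None:
        self.translating = False      # backend and loaded models stay up

    def quit(self) -> None:
        self.translating = False
        self.backend_running = False  # the backend stops together with the app

    def capturing_audio(self) -> bool:
        return self.backend_running and self.translating


if __name__ == "__main__":
    state = SessionState()
    state.start_backend()
    print(state.capturing_audio())  # False: backend idle, nothing captured
    state.press_start()
    print(state.capturing_audio())  # True
```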

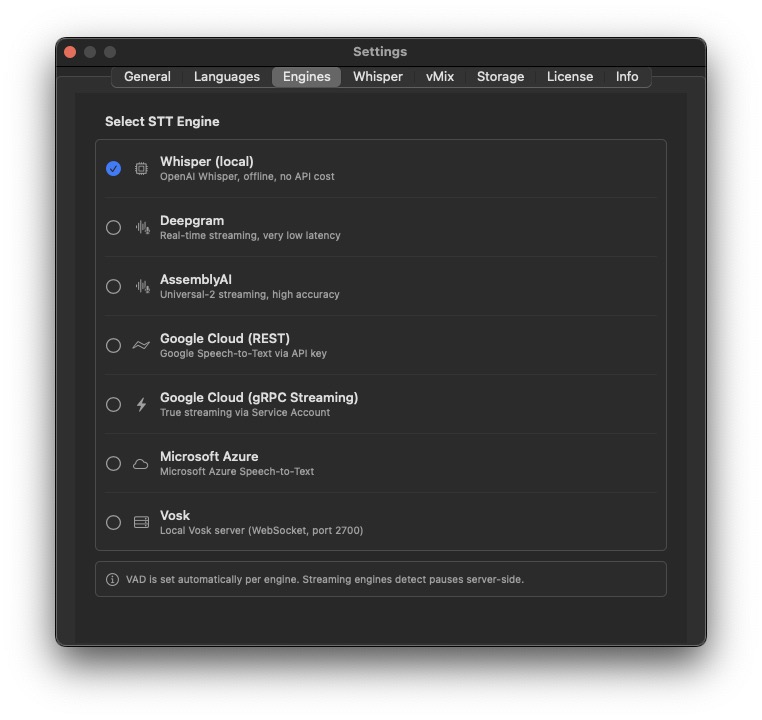

Each engine pairs a speech-to-text source with a translation provider. Some run fully local, some call the cloud directly from the app, and some stream through the local Python backend. The matrix shows which engines need the backend running.

| Engine | STT | Translation | Needs backend? |
|---|---|---|---|
| Whisper | Whisper (local) | Mistral (local) | yes |
| Google REST | Google STT (direct) | Google Translate (direct) | no |
| Google gRPC | Google gRPC Streaming (via backend) | Google Translate (direct) | yes |
| Deepgram | Deepgram Streaming (direct) | Google Translate (direct) | no |
| AssemblyAI | AssemblyAI Streaming (direct) | Google Translate (direct) | no |
| Azure | Azure STT (direct) | Azure Translation (same call as STT) | no |
| Vosk | Vosk (local, via backend) | Mistral (local) | yes |

Google gRPC in detail: audio → Python backend → Google gRPC STT (true streaming, no VAD needed) → backend returns the recognized text (skipTranslation: true, so Mistral is skipped) → the app calls Google Translate REST directly from Swift (same API key) → original + translation are displayed.
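The client-side half of this flow is sketched below in Python. The app itself does this in Swift, so the Python is for illustration only. The URL and the `q`, `target`, `format`, and `key` parameters belong to the public Cloud Translation v2 REST API. The backend message fields (`text`, `translation`) are hypothetical, since the page names only `skipTranslation`.

```python
import json
from typing import Any, Tuple
from urllib.parse import urlencode

TRANSLATE_V2 = "https://translation.googleapis.com/language/translate/v2"


def translate_request(text: str, target: str, api_key: str) -> Tuple[str, bytes]:
    """Build (url, JSON body) for a Cloud Translation v2 REST POST."""
    url = f"{TRANSLATE_V2}?{urlencode({'key': api_key})}"
    body = json.dumps({"q": text, "target": target, "format": "text"}).encode("utf-8")
    return url, body


def handle_backend_result(raw: str, target: str, api_key: str) -> Tuple[str, str, Any]:
    """Route one recognized-text message from the backend.

    ("translate", original, (url, body)) -> client must call Google Translate itself
    ("display", original, translation)   -> backend already translated (e.g. Mistral)
    """
    msg = json.loads(raw)
    original = msg["text"]  # hypothetical field name
    if msg.get("skipTranslation"):
        return "translate", original, translate_request(original, target, api_key)
    return "display", original, msg.get("translation", "")  # hypothetical field


def parse_translate_response(raw: str) -> str:
    """Pull the first translatedText out of a v2 response body."""
    return json.loads(raw)["data"]["translations"][0]["translatedText"]
```

To send the request, pass `urllib.request.Request(url, data=body, headers={"Content-Type": "application/json; charset=utf-8"})` to `urllib.request.urlopen`, which issues the POST. Setting `format` to `"text"` tells the API to treat the input as plain text rather than HTML.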

Requirements: backend running (for gRPC) · google-cloud-speech installed in the Python venv · Service Account JSON · Google API key (for Translate). Restart the backend after enabling.
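Before restarting the backend, you could verify the gRPC requirements with a small preflight check like the one below. It is a hypothetical helper, not part of LLT. It takes the credentials path and API key as arguments because the page does not say where LLT stores them. Google client libraries conventionally read the service-account path from `GOOGLE_APPLICATION_CREDENTIALS`. Service-account key files carry `"type": "service_account"`.

```python
import importlib.util
import json
from pathlib import Path
from typing import List, Optional


def speech_library_installed() -> bool:
    """True if google-cloud-speech is importable by this interpreter (run it with the venv's Python)."""
    try:
        return importlib.util.find_spec("google.cloud.speech") is not None
    except ModuleNotFoundError:  # the parent package google or google.cloud is absent
        return False


def grpc_preflight(credentials_path: Optional[str], api_key: Optional[str]) -> List[str]:
    """Return human-readable problems; an empty list means the prerequisites look present."""
    problems: List[str] = []
    if not speech_library_installed():
        problems.append("google-cloud-speech is not installed in this venv")
    if not credentials_path or not Path(credentials_path).is_file():
        problems.append("service account JSON not found")
    else:
        try:
            info = json.loads(Path(credentials_path).read_text(encoding="utf-8"))
        except (OSError, ValueError):  # unreadable or not valid JSON
            info = None
        if not isinstance(info, dict) or info.get("type") != "service_account":
            problems.append("credentials file is not a service account key")
    if not api_key:
        problems.append("Google API key (for Translate) is missing")
    return problems


if __name__ == "__main__":
    for problem in grpc_preflight(None, None):
        print("-", problem)
```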

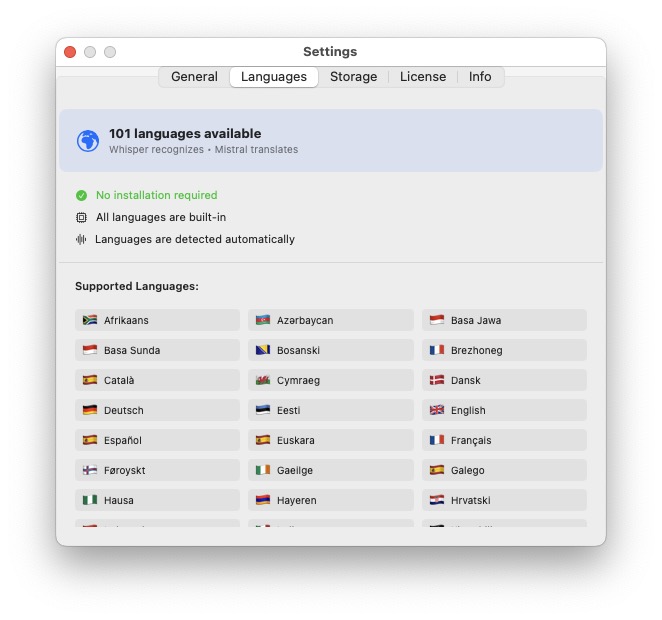

Whisper detects the source language automatically. Mistral translates to any of 101 target languages. Set source to "Auto" and just let it figure out what's being spoken.

Afrikaans, Albanian, Amharic, Arabic, Armenian, Assamese, Azerbaijani, Bashkir, Basque, Belarusian, Bengali, Bosnian, Breton, Bulgarian, Burmese, Cantonese, Catalan, Chinese, Croatian, Czech, Danish, Dutch, English, Estonian, Faroese, Finnish, French, Galician, Georgian, German, Greek, Gujarati, Haitian Creole, Hausa, Hawaiian, Hebrew, Hindi, Hungarian, Icelandic, Indonesian, Irish, Italian, Japanese, Javanese, Kannada, Kazakh, Khmer, Korean, Lao, Latin, Latvian, Lingala, Lithuanian, Luxembourgish, Macedonian, Malagasy, Malay, Malayalam, Maltese, Māori, Marathi, Mongolian, Nepali, Norwegian, Nynorsk, Occitan, Pashto, Persian, Polish, Portuguese, Punjabi, Romanian, Russian, Sanskrit, Serbian, Shona, Sindhi, Sinhala, Slovak, Slovenian, Somali, Spanish, Sundanese, Swahili, Swedish, Tagalog, Tajik, Tamil, Tatar, Telugu, Thai, Tibetan, Turkish, Turkmen, Ukrainian, Urdu, Uzbek, Vietnamese, Welsh, Yiddish, Yoruba

LLT runs AI models on your Mac. This requires Apple Silicon and enough RAM for the models.

macOS 13 (Ventura) or newer. Apple Silicon (M1, M2, M3, M4 — any variant). 8 GB RAM minimum (base model), 16 GB recommended (medium/large). Whisper models need 1–10 GB RAM depending on size. Python 3.11+ for the backend.
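The following back-of-the-envelope sketch shows how RAM limits the Whisper model choice. The Whisper figures are the approximate "Required VRAM" values from the openai-whisper README. Mistral's weight memory is estimated as parameters × bits / 8, which gives 7 × 10⁹ × 4 / 8 = 3.5 GB. The 3 GB reserved for macOS and other apps is an assumption. The estimate is crude: it ignores quantization scales, the KV cache, and how LLT's backend actually loads Whisper.

```python
from typing import Optional

# Approximate memory per Whisper model, from the openai-whisper README ("Required VRAM").
WHISPER_GB = {"tiny": 1, "base": 1, "small": 2, "medium": 5, "large": 10}


def mistral_weights_gb(params: float = 7e9, bits: int = 4) -> float:
    """Weight memory only: parameters x bits / 8 bytes. Ignores quantization scales and KV cache."""
    return params * bits / 8 / 1e9


def largest_whisper_model(ram_gb: float, os_headroom_gb: float = 3.0) -> Optional[str]:
    """Pick the biggest Whisper model that fits next to Mistral (os_headroom_gb is an assumption)."""
    budget = ram_gb - os_headroom_gb - mistral_weights_gb()
    fitting = [name for name, need in WHISPER_GB.items() if need <= budget]
    return fitting[-1] if fitting else None  # dict order runs smallest to largest


if __name__ == "__main__":
    print(mistral_weights_gb())  # 3.5
    for ram in (8, 16, 32):
        print(ram, "GB ->", largest_whisper_model(ram))  # base, medium, large
```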

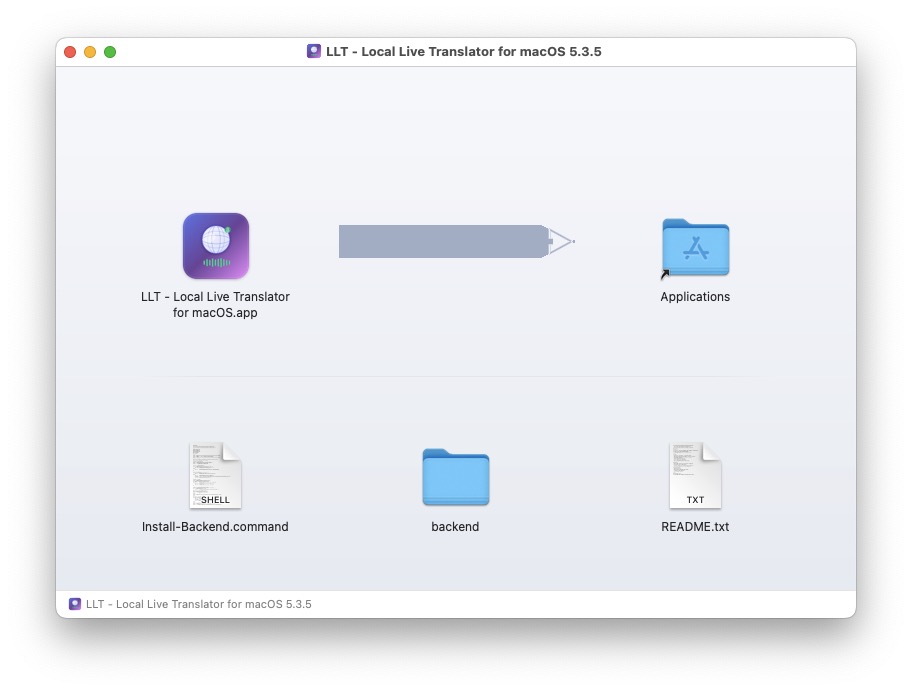

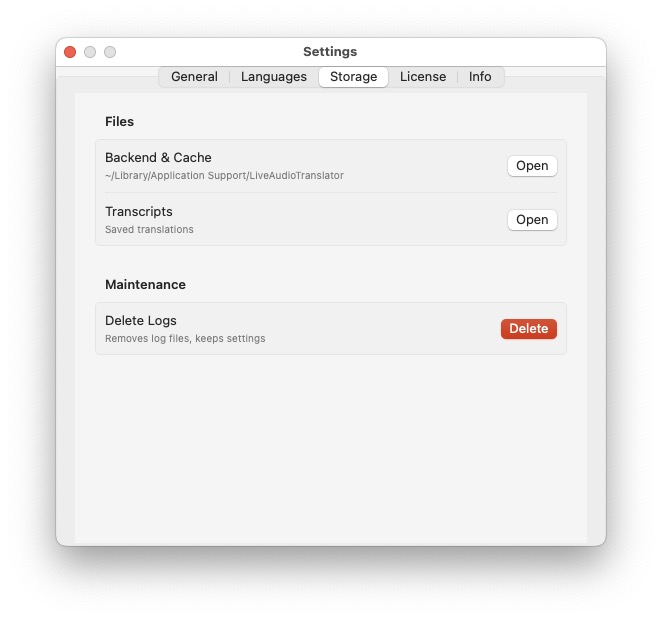

DMG with the app + Install-Backend.command script. The script creates a Python venv, installs Whisper, MLX, Mistral, and all dependencies. Choose your Whisper model during install (tiny to large) — change anytime in Settings > Whisper. One-time setup.
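Install-Backend.command is a shell script whose contents are not shown here. The Python sketch below only illustrates the general steps: check the interpreter version, create a venv, then install packages with the venv's own pip. The package list is an assumption. `openai-whisper`, `mlx`, and `mlx-lm` are real PyPI names, but LLT may use different ones. Mistral is model weights rather than a package, and no models are fetched here because they download on first run.

```python
import sys
import venv
from pathlib import Path
from typing import List

# Assumed PyPI names for "Whisper, MLX, Mistral"; Mistral itself is model weights,
# loaded via mlx-lm in this sketch. The real script's dependency list is not published.
PACKAGES = ["openai-whisper", "mlx", "mlx-lm"]


def check_python() -> None:
    """The backend needs Python 3.11+."""
    if sys.version_info < (3, 11):
        raise RuntimeError(f"Python 3.11+ required, found {sys.version.split()[0]}")


def create_venv(venv_dir: Path) -> None:
    """Create the virtual environment with pip available inside it."""
    check_python()
    venv.create(venv_dir, with_pip=True)


def install_commands(venv_dir: Path) -> List[List[str]]:
    """Commands to run with subprocess.run(cmd, check=True) after create_venv()."""
    python = str(venv_dir / "bin" / "python")  # macOS/Linux venv layout
    return [
        [python, "-m", "pip", "install", "--upgrade", "pip"],
        [python, "-m", "pip", "install", *PACKAGES],
    ]
```

Calling the venv's own `python -m pip` ensures that the packages land inside the venv and not in whichever `pip` happens to be first on `PATH`.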

🚀 Launch Price — $49 after launch

Version 1.2.1 — 14 days full use, then license required

Requires an Apple Silicon Mac (M1/M2/M3/M4); 16 GB+ RAM recommended, 8 GB minimum. Backend installs automatically. Models download on first run (~4 GB).

LLT — Local Live Translator for macOS is part of the Adelvo family of professional media tools.

FLAGSHIP

Professional vMix rundown & automation: timeline, call sheets, Stream Deck export, 20+ languages.

Route app audio, mic, webcam & line-in to 4 stereo outputs with EQ, delay, pan.

Audio compressor for browser tabs — fixes volume differences on social media and video pages.

Whisper + Mistral running locally on Apple Silicon. Just works.